The Riemann Hypothesis

The Riemann hypothesis is one of the unsolved cornerstones of analytic number theory. Formulated by Bernhard Riemann in 1859, it states that all non-trivial zeros of the Riemann zeta function, denoted ζ(s), have a real part equal to 1/2.

In simpler terms, the Riemann hypothesis asserts that prime numbers, although they appear to be randomly distributed, follow a deep pattern described by the zeta function. This function has points where it equals zero, and the conjecture claims that all of them lie on the same line in the complex plane. Proving this would transform our understanding of prime numbers.

The Riemann zeta function is a mathematical function that in its simplest form is defined as the following infinite sum:

ζ(s) = ∑ (n = 1 to ∞) 1/nˢ

This infinite sum converges when the real part of s is greater than 1. Here, s is not an ordinary number but a complex number with both real and imaginary parts. Through a process called analytic continuation, the function can be extended to almost the entire complex plane.

A zero of a function is a value for which the function equals zero. In the case of the Riemann zeta function, these zeros fall into two categories. The trivial ones occur at negative even integers (−2, −4, −6, etc.) and are easy to identify. The non-trivial ones, far more enigmatic, lie in the strip of the complex plane between the lines Re(s) = 0 and Re(s) = 1. The Riemann hypothesis states that all these non-trivial zeros lie exactly on the critical line Re(s) = 1/2, which is the core of the still-unsolved problem.

The consequences go beyond number theory, with connections appearing in algebraic geometry, harmonic analysis, and dynamical systems. Although millions of zeros have been computationally verified on the critical line, a general proof does not yet exist. Solving this hypothesis would radically change our deep understanding of the patterns governing the sequence of prime numbers.

The Navier-Stokes Problem

The Navier-Stokes equations are fundamental in the study of fluid motion. They describe the evolution of a fluid’s velocity field as a function of time and space, taking into account factors such as viscosity, pressure, and external forces. In the equations, U is the velocity field, P is the pressure, ρ the density, μ the dynamic viscosity, t the time, and F the external forces per unit volume.

The symbol ∇ is called nabla or the differential operator. In the Navier-Stokes equations, it is used with different meanings depending on how it acts. ∇P is the gradient of pressure, indicating how pressure changes in space. U·∇U is the convective term, representing the change in velocity due to the fluid’s own motion. ∇²U is the Laplacian of velocity, measuring the diffusion or smoothing out of velocity due to viscosity.

This mathematical problem, formulated as one of the Millennium Prize problems, consists of proving whether these equations have smooth and unique solutions in three dimensions for suitable initial conditions. In this context, “smooth” means the solutions are differentiable and do not exhibit singularities, points where physical variables become infinite in finite time.

Although in two dimensions the existence of global smooth solutions has been proven, the three-dimensional case remains a mystery. The possible emergence of singularities raises doubts about the validity of the model in highly complex scenarios such as turbulent regimes. Despite its practical usefulness in simulations for aeronautics, oceanography, meteorology, and medicine, the theoretical foundation of the model remains incomplete.

The difficulty lies in the nonlinear nature of the convective term, which complicates the global analysis of the system. A positive resolution would establish mathematical rigor for one of the most widely used systems in applied sciences. On the other hand, proving the inevitable formation of singularities would indicate the need to modify the model in order to faithfully describe certain physical phenomena. In either case, the impact would be profound for our understanding of fluid dynamics.

The P versus NP Problem

The P versus NP problem is one of the great questions in computer science. It asks whether every problem whose solution can be checked quickly can also be solved quickly. Here, “quickly” means in polynomial time: the number of steps grows reasonably as the size of the input increases. The big question is whether P = NP.

A problem belongs to the class P if there exists an algorithm that solves it in polynomial time with respect to the input size. A problem is in NP if, given a candidate solution, it can be verified efficiently.

A classic example is the traveling salesman problem. Given a list of cities with their distances, one seeks the shortest route that visits each city exactly once. Verifying whether a proposed route is correct is easy, but finding the best one may require exponential time in the worst case.

The significance of this problem goes beyond theory. If it were proven that P = NP, it would mean that every problem for which an efficient verification exists could also be solved efficiently. This would have an immediate impact on areas such as cryptography, where security depends on problems that are hard to solve but easy to verify, such as factoring large integers or computing discrete logarithms.

So far no conclusive proof has been found. Most experts firmly believe that P ≠ NP, although this intuition remains without formal support. The Clay Mathematics Institute (CMI), founded in Cambridge, Massachusetts, has included it as one of the Millennium Prize problems. A definitive solution would redefine the limits of what is computable and transform fields such as artificial intelligence, game theory, cryptography, mathematical logic, and optimization systems.

The Collatz Conjecture

The Collatz conjecture is one of the most famously simple-to-state yet surprisingly resistant unsolved problems. Also known as the 3n + 1 problem, it was proposed by Lothar Collatz in 1937.

The statement is as follows: take any positive integer n. If n is even, divide it by two. If it is odd, multiply it by three and add one. Repeat the process with the new value. The conjecture claims that no matter the starting number, the sequence eventually reaches the value one.

Formally, a function f(n) is defined as:

f(n) = n/2 when n is even f(n) = 3n + 1 when n is odd

Example with n = 6: 6 → 3 → 10 → 5 → 16 → 8 → 4 → 2 → 1.

The sequence generated by successive iterations of f(n) is known as the Collatz sequence. Although it is easy to compute and has been verified for numbers as large as 2⁶⁸, it has not been proven that all sequences end in one.

The seemingly chaotic behavior of these sequences has intrigued mathematicians for decades. Despite its simplicity, current tools in number theory and discrete analysis have not been able to establish a general structure that guarantees convergence for every natural number. The difficulty lies in the absence of a known invariant or structural property that would allow control over the growth or decrease of the sequence across all possible iterations. The sequence can rise and fall unpredictably before eventually falling into the cycle 4 → 2 → 1.

The mathematician Paul Erdős remarked that “mathematics is not yet ready for such problems.” Its deceptive simplicity and resistance to analysis have made it one of the paradigmatic examples of how something that appears trivial can conceal deep and still unexplored complexity.

The Goldbach Conjecture

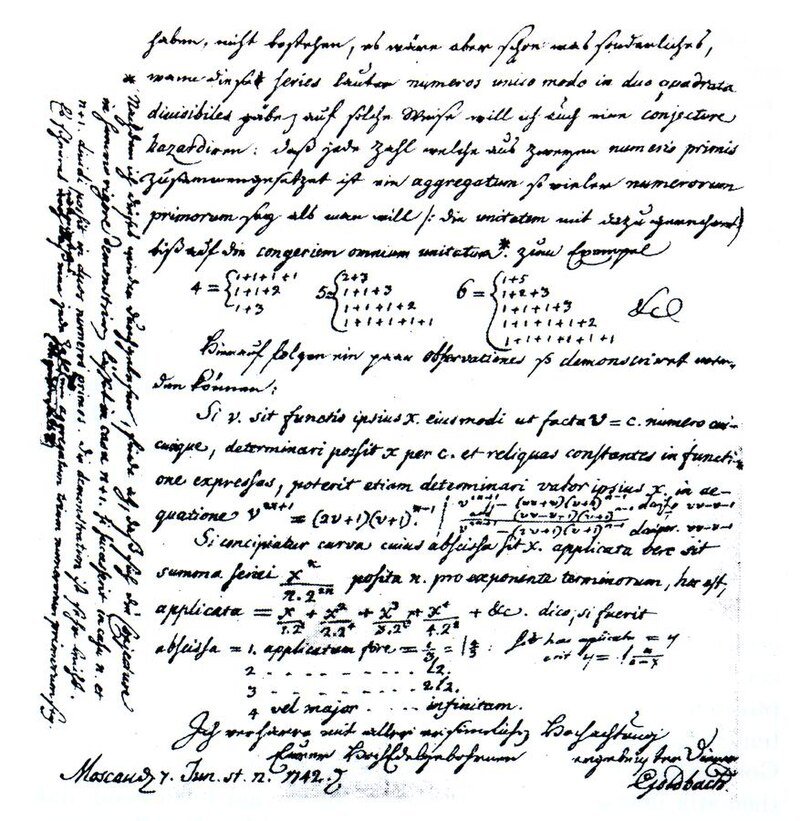

The Goldbach conjecture is one of the oldest unsolved problems in number theory. It was proposed in a letter from Christian Goldbach to Leonhard Euler in 1742. It states that every even integer greater than two can be expressed as the sum of two prime numbers. For example, 8 = 3 + 5, 20 = 7 + 13, and so on.

Formally, the statement asserts that for every even integer n greater than 2, there exist primes p and q such that n = p + q. Despite the simplicity of the statement, a general proof has not yet been found.

Numerous partial advances have been made over the centuries. In 1937, Ivan Vinogradov proved that every sufficiently large odd number can be written as the sum of three primes. Later, in 1973, Chen Jingrun showed that every sufficiently large even number is the sum of a prime and a number that is either prime or the product of two primes.

The conjecture has been verified computationally up to extremely large numbers. Today it has been confirmed for all even numbers less than 4 × 10¹⁸ without any counterexample found. Nevertheless, the absence of a theoretical proof prevents it from being considered solved.

One of the fundamental difficulties lies in the seemingly irregular distribution of prime numbers. Although probabilistic theories have been developed that suggest the plausibility of the conjecture, these are not sufficient for a formal proof. Solving it would imply significant advances in the understanding of additivity in prime numbers and would possibly open new avenues within analytic number theory and additive theory.

Further reading:

- The Clay Mathematics Institute’s official Millennium Prize problems page (CMI)

- Riemann’s original 1859 paper is available in English translation (Clay Mathematics Institute)

- Terence Tao’s work on the Collatz conjecture: “Almost all orbits of the Collatz map attain almost bounded values,” 2019 (arXiv)

- For the Goldbach conjecture, see Helfgott’s proof of the weak Goldbach conjecture: “The ternary Goldbach conjecture is true,” 2013 (arXiv)

Leave a Reply